I’ve seen it in code reviews, and you probably have too. A PR changes a button’s color, and suddenly 47 snapshot tests fail. The author updates them all with --updateSnapshot, the reviewer skims past 200 lines of .snap file changes, and everyone moves on. No one actually verified anything.

This is snapshot testing’s dirty secret: it feels like testing without actually being testing.

What Snapshot Tests Actually Assert

I know, most developers probably like snapshot testing. It’s easy, and it takes away nearly all the effort of writing tests. But if that’s what you’re looking for, you could just as well skip tests altogether and save yourself the work.

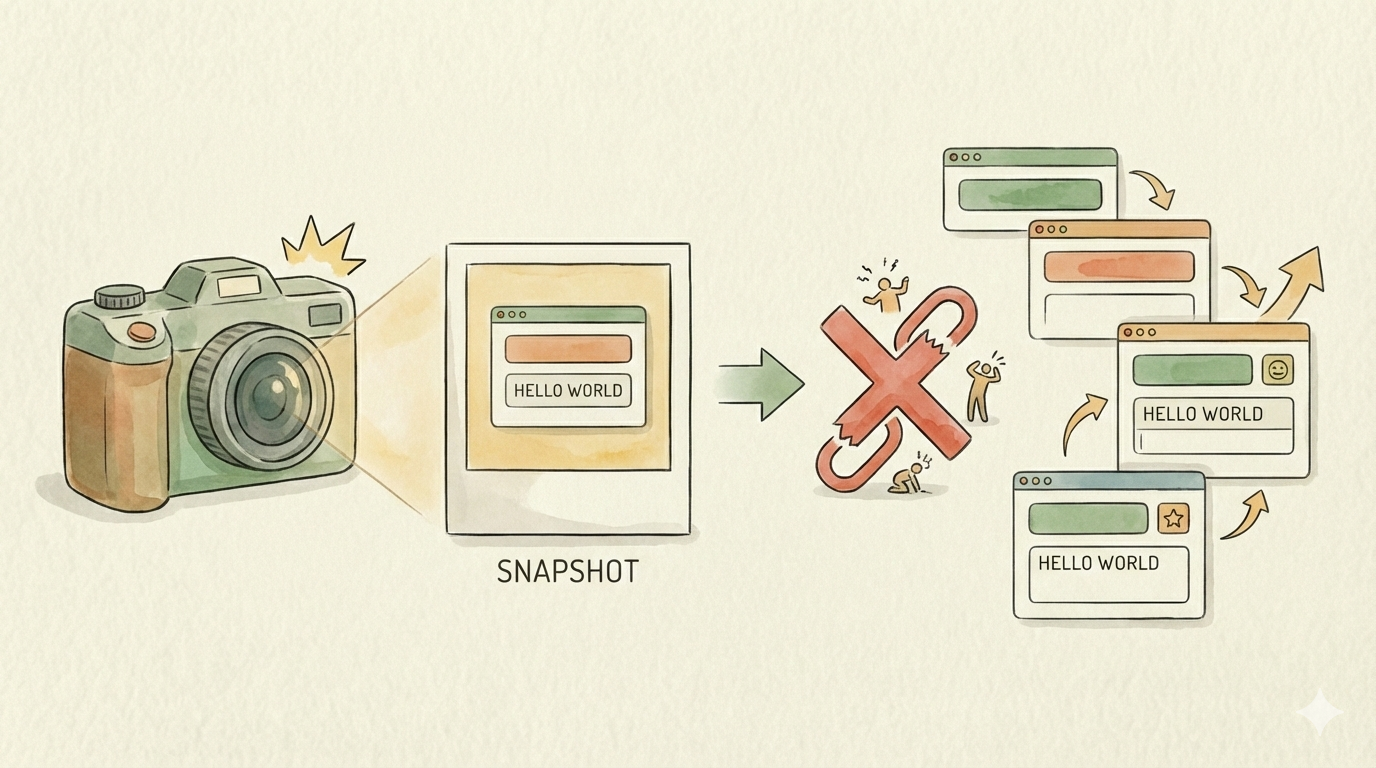

Because when you write expect(component).toMatchSnapshot(), you’re not asserting that your component behaves correctly. You’re asserting that it hasn’t changed since the last time someone ran the tests. That’s a fundamentally different thing.

A unit test encodes developer intent: “when the user clicks submit, the form should validate inputs.” A snapshot test encodes nothing—it just says “the output should look exactly like it did before.” This distinction matters more than most teams realize.

If your component had a bug when the snapshot was first created, the snapshot happily locks that bug in. If a colleague updates the snapshot without understanding what changed, the snapshot happily locks that in too.
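To make the "locks that bug in" point concrete, here is a minimal sketch. The `renderPrice` component and the `matchesSnapshot` helper are hypothetical; the helper is a simplified stand-in for the record-then-compare idea behind snapshot testing, not Jest's actual implementation.

```javascript
// Hypothetical component: renders a price label.
// The bug: it rounds down instead of rounding to the nearest cent.
function renderPrice(amount) {
  const cents = Math.floor(amount * 100); // bug: should be Math.round
  return `<span class="price">$${(cents / 100).toFixed(2)}</span>`;
}

// Simplified stand-in for a snapshot store (an assumption, not Jest's code):
// the first call records the output, later calls compare against it.
const snapshots = new Map();
function matchesSnapshot(name, output) {
  if (!snapshots.has(name)) {
    snapshots.set(name, output); // first run: whatever we got becomes "correct"
    return true;
  }
  return snapshots.get(name) === output;
}

// The first run records the buggy output; the second run passes against it.
const firstRun = matchesSnapshot('price label', renderPrice(19.999));
const secondRun = matchesSnapshot('price label', renderPrice(19.999));

// An explicit check encodes the intent (19.999 should display as $20.00)
// and therefore exposes the bug.
const explicitCheck = renderPrice(19.999).includes('$20.00');

console.log({ firstRun, secondRun, explicitCheck });
```

Both snapshot runs report success, while the explicit check fails, because only the explicit check knows what the output was supposed to be.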

The Update Reflex

Here’s where theory meets reality. On a big project, a single refactor—renaming a CSS class, updating a shared component’s props, changing a translation string—can cascade into dozens of failing snapshots across the codebase.

What happens next is predictable: developers run jest --updateSnapshot and move on. They’ve been trained to do this because most snapshot failures aren’t real bugs. They’re noise. And when your signal-to-noise ratio is terrible, people stop paying attention.

This creates an ironic situation: the test that was supposed to catch unintended changes becomes the test that everyone blindly updates. You’ve spent engineering effort maintaining test infrastructure that actively erodes trust in your test suite.
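The cascade is easy to reproduce in miniature. The sketch below uses hypothetical string-rendering functions in place of real components and plain string comparison in place of a snapshot matcher; the class names and screen names are made up for illustration.

```javascript
// Hypothetical shared component used by several screens.
function renderButton(label, className) {
  return `<button class="${className}">${label}</button>`;
}

// Three hypothetical screens that all embed the shared button.
function renderScreens(buttonClass) {
  return {
    login: `<form>${renderButton('Log in', buttonClass)}</form>`,
    signup: `<form>${renderButton('Sign up', buttonClass)}</form>`,
    checkout: `<section>${renderButton('Pay now', buttonClass)}</section>`,
  };
}

// "Snapshots" recorded before the refactor.
const recorded = renderScreens('btn-primary');

// The refactor: rename the CSS class. Nothing the user sees has changed.
const current = renderScreens('button--primary');

// Snapshot-style comparison: every screen that embeds the button fails.
const failingSnapshots = Object.keys(recorded).filter(
  (name) => recorded[name] !== current[name]
);

// Behavior-style comparison: check only the labels users actually see.
const labels = { login: 'Log in', signup: 'Sign up', checkout: 'Pay now' };
const failingBehaviorChecks = Object.keys(labels).filter(
  (name) => !current[name].includes(`>${labels[name]}<`)
);

console.log('failing snapshots:', failingSnapshots.length);
console.log('failing behavior checks:', failingBehaviorChecks.length);
```

One rename fails every snapshot that includes the button, while the checks tied to user-visible behavior stay green. Scale the three screens up to a real codebase and you get the reflexive update described above.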

The Code Review Problem

Snapshot diffs are painful to review. A 500-line .snap file change tells you almost nothing at a glance. Did the DOM structure change intentionally? Is that missing aria-label a bug or a deliberate cleanup? The reviewer has to mentally reconstruct what the component should look like — which is exactly the work that explicit assertions would have done for them.

Teams that take snapshots seriously treat them as code artifacts that deserve careful review. In practice, most teams scroll past them.

When They Actually Hurt

Beyond wasted effort, snapshot tests create real costs:

They discourage refactoring. A developer new to the codebase sees that renaming a CSS class breaks 30 tests. They either skip the cleanup or spend an hour updating snapshots they don’t fully understand—introducing risk either way.

They inflate coverage metrics. A single toMatchSnapshot() call can touch dozens of code paths without verifying any of them behave correctly. Your coverage report says 90%, but your actual confidence should be much lower.

They’re fragile across environments. Translations change, date formatting varies by locale, third-party component libraries update their markup. None of these are bugs in your code, but they all break your snapshots.
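The locale problem in particular is easy to demonstrate. In this sketch, `formatOrderDateImplicit` and `formatOrderDatePinned` are hypothetical helpers; pinning the locale and time zone is one common mitigation, shown here as an assumption about how a team might handle it rather than a required fix.

```javascript
// Hypothetical helper: formats a date with whatever locale and time zone
// the machine happens to use. Output can differ between a laptop and CI.
function formatOrderDateImplicit(date) {
  return date.toLocaleDateString();
}

// Same helper with locale and time zone pinned, so the output no longer
// depends on the environment that runs the test.
function formatOrderDatePinned(date) {
  return date.toLocaleDateString('en-US', { timeZone: 'UTC' });
}

const orderDate = new Date(Date.UTC(2024, 0, 15, 12, 0, 0));

console.log('implicit:', formatOrderDateImplicit(orderDate));
console.log('pinned:  ', formatOrderDatePinned(orderDate));
```

A snapshot that captures the implicit version records one machine’s settings along with the markup. Any component that formats dates, numbers, or currencies this way can fail on a machine with different defaults even though no code changed.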

Where Snapshots Do Work

I’m not saying snapshots are universally useless. They work well in narrow scenarios:

Serialized data transformations. If you’re testing a Babel plugin or a code formatter, snapshot tests shine. The input-output relationship is clear, the snapshots are small and readable, and changes are genuinely meaningful.

Pure presentational components with no logic. If a component takes props and renders markup with zero business logic, a small, focused snapshot can catch unintended markup changes, especially when paired with shallow rendering.

Final-output artifacts like PDFs. When your system generates invoices, contracts, or reports, “the output should look exactly like this” is the requirement — not a proxy for some deeper behavior. PDF generation is typically deterministic, each test maps to one document type, and a snapshot failure is far more likely to signal a real problem than noise. Visual snapshot diffing (rendering pages to images and comparing them) works better than byte-level comparison here, since PDF metadata and font subsetting can vary between runs.

Change detection (not correctness). Some teams use snapshots deliberately as a “what changed” radar during development, not as a correctness gate. That’s a valid workflow, as long as the team understands the distinction.
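As an illustration of the serialized-transformation case from the list above, here is a sketch with a hypothetical `formatImport` function standing in for a real formatter or Babel plugin. The stored input/output pairs play the role of the snapshot: they are short enough to read in a review.

```javascript
// Hypothetical transformation: sorts and de-duplicates the names in a
// single-line named import statement and normalizes the quotes.
function formatImport(line) {
  const match = line.match(/^import\s*\{([^}]*)\}\s*from\s*(['"])(.+)\2;?$/);
  if (!match) return line; // leave anything we do not recognize untouched
  const names = [
    ...new Set(
      match[1]
        .split(',')
        .map((name) => name.trim())
        .filter(Boolean)
    ),
  ].sort();
  return `import { ${names.join(', ')} } from '${match[3]}';`;
}

// The "snapshot": small, readable input/output pairs.
const cases = [
  ["import {b,a} from 'x'", "import { a, b } from 'x';"],
  [
    'import { useState, useEffect, useState } from "react";',
    "import { useEffect, useState } from 'react';",
  ],
];

const mismatches = cases.filter(
  ([input, expected]) => formatImport(input) !== expected
);

console.log('mismatches:', mismatches.length);
```

When one of these pairs changes, the diff is a single line, and a reviewer can judge at a glance whether the new output is what the formatter should produce.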

What To Do Instead

For most component testing, replace snapshots with explicit assertions:

// Instead of: expect(component).toMatchSnapshot()

// Assert specific behavior:

expect(screen.getByRole('button')).toHaveTextContent('Submit');

expect(screen.getByLabelText('Email')).toBeRequired();

expect(screen.queryByText('Error')).not.toBeInTheDocument();

Each assertion documents intent. When a test fails, you know exactly what broke and why. The test name tells you what should happen; the assertion tells you what actually happened. That’s a test you can trust.

For visual regressions, consider dedicated visual testing tools that compare screenshots with perceptual diffing—they catch what actually matters (how things look) without breaking on every DOM change.
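To give a feel for how screenshot comparison tolerates noise, here is a deliberately simplified sketch. Real visual testing tools use more sophisticated perceptual metrics; the `diffRatio` function, the per-channel threshold, and the tiny 2x2 "screenshots" below are all assumptions made for illustration.

```javascript
// Compares two same-sized RGBA pixel buffers and returns the fraction of
// pixels that differ by more than `channelTolerance` on any channel.
function diffRatio(a, b, channelTolerance) {
  if (a.length !== b.length || a.length % 4 !== 0) {
    throw new Error('Buffers must be same-sized RGBA data');
  }
  let changed = 0;
  for (let i = 0; i < a.length; i += 4) {
    const differs =
      Math.abs(a[i] - b[i]) > channelTolerance ||
      Math.abs(a[i + 1] - b[i + 1]) > channelTolerance ||
      Math.abs(a[i + 2] - b[i + 2]) > channelTolerance ||
      Math.abs(a[i + 3] - b[i + 3]) > channelTolerance;
    if (differs) changed += 1;
  }
  return changed / (a.length / 4);
}

// Hypothetical 2x2 baseline screenshot: white, black, red, blue pixels.
const baseline = Uint8ClampedArray.from([
  255, 255, 255, 255, 0, 0, 0, 255,
  255, 0, 0, 255, 0, 0, 255, 255,
]);

// Same image with slight rendering noise on one channel of one pixel.
const withNoise = Uint8ClampedArray.from(baseline);
withNoise[0] = 252;

// Same image with the red pixel recolored to green-ish: a real change.
const recolored = Uint8ClampedArray.from(baseline);
recolored[8] = 0;
recolored[9] = 128;

const noiseRatio = diffRatio(baseline, withNoise, 8);
const changeRatio = diffRatio(baseline, recolored, 8);

console.log({ noiseRatio, changeRatio });
```

The noisy image stays below the threshold, while the recolored pixel registers as a change. A markup-level rename that leaves the pixels alone would not show up at all.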

The Takeaway

Snapshot testing optimizes for ease of writing tests at the expense of everything else: readability, maintainability, signal quality, and actual confidence in your code. If your test suite is full of snapshots, you likely have high coverage numbers and low real confidence — the worst combination.

Write fewer tests that assert specific behavior. Your future self (and your teammates) will thank you.